🇨🇭Davos On My Mind

Dario calls chip exports “selling nukes to North Korea,” AI CEOs predict your job is toast, and OpenAI evolves into something that looks a lot more like Accenture 🏔️

Davos has always tickled me as a funky sort of concept, but Davos 2026 was decidedly weird. Or rather: weird in a bleak, tension-in-the-undertones kind of way. The usual “AI will create abundance for all” vibes? Gone. Instead, we got Dario Amodei publicly nuking his relationship with Nvidia (his GPU supplier and a $10B investor!!!) to make a geopolitical point, Demis Hassabis telling students to get good with AI tools or get left behind, and both of them agreeing – on stage, together – that governments are wildly unprepared for what’s coming.

All world leaders afterwards: “thaaaanks, guys!” 😬😂

⛪ Who needs church when AI serves confessionals like this? ⛪

Lest you think our fearless AI leaders’ tour de darkness made shipping stop for a week, fear not! Anthropic was a busy bee: they shipped a new constitution, healthcare integrations, and Claude Code in VS Code. Last week’s Cowork release continues to delight. OpenAI published what looks like a roadmap to becoming an AI-native consulting firm. And China, well – China keeps delivering open-source models that make Western pricing look silly.

Ooookay – let’s get into it!

🔵 Davos: Dario Goes Full Scorched Earth

If there was one headline from Davos, it was this: Dario Amodei comparing H200 chip exports to China to “selling nuclear weapons to North Korea.” The context makes it wilder: he said this while Nvidia’s Jensen Huang was at the same conference, merely 2 months after a $10B Nvidia investment into Anthropic.

This is a CEO who decided the geopolitical stakes are high enough to publicly torch a key supplier relationship. Respect. Ooor… one might also say… this is a self-serving CEO who is afraid of Chinese open-source models catching up with his API and developer business. ¯\_(ツ)_/¯ 🙃 Truth is in the eye of the beholder – you tell me what you think!

As a reminder, the new Trump administration policy lets Nvidia sell H200s to China with a 25% revenue cut to the US government and some quantity caps.

Whatever you think the real reason is, the policy clearly crossed a line Dario couldn’t stomach. His argument on the Davos stage: the US is years ahead in chip manufacturing, and that lead is the primary leverage point in an AI race where capability is compounding exponentially.

But the Davos drama went beyond chips.

Dario and Demis did a joint session moderated by The Economist, and the convergence was striking. Two competitors. Two different companies. Same uncomfortable conclusion: the disruption is coming faster than institutions can adapt.

Labor economy

On job displacement, they didn’t hedge:

Dario Amodei (Anthropic): AI handles “almost all” software engineering tasks end-to-end within 6-12 months. Not “assist with.” Handle!!! In his mind there’s a world where half of entry-level white-collar jobs vanish within 1-5 years.

Demis Hassabis (DeepMind): “Even the most pessimistic economists might be underestimating the speed of the transition.” His advice to undergrads: become “unbelievably proficient” with AI tools. Not optional, mind you: existential.

On AGI timelines, they disagreed, but the disagreement was telling. Dario says 1-2 years. Demis says 5-10 years, “50% chance by 2030.” They debated this on stage, and Dario asked: “assume I’m right and it can be done in 1-2 years, why can’t we slow down to Demis’s timeline?”

Demis’s answer was basically: Well, no. Beeeecause: Geopolitical adversaries.

Soooo….. even the safety-focused labs feel trapped in an acceleration dynamic they can’t unilaterally exit. Yikes.

Two scientists vs a businessman

It was interesting to think of these two AI leaders as the scientists on our grand global AI stage. OpenAI, by contrast, is run by a businessman. The implication was clear there too. In the conversation, Demis also mentioned that Gemini won’t run ads – a subtle shot at OpenAI’s ad testing. Although, let’s be honest: given that Google is literally an ad business, I found that to be both a cheap shot and quite hollow-sounding.

More geopolitics thoughts

Lastly, in contrast to Dario’s clear anxiety about geopolitics, Demis called the DeepSeek panic from last year “a massive overreaction,” claiming Chinese labs are about 6 months behind the frontier and “very good at catching up” but haven’t shown they can “innovate beyond.” Hmmm… not sure I would underestimate those labs at this point. I’d like him to be right, of course – I am deeply America-first. But I actually think Dario is right to be nervous here.

🟣 Anthropic’s Constitution

Anthropic published a 23,000-word document – released under Creative Commons for anyone to use – that attempts to explain not what Claude should do, but why.

Amanda Askell, whose literal job at Anthropic is “crafting the personality of Claude” (she’s the Claude Whisperer!), described the approach to TIME: “Imagine you suddenly realize that your six-year-old child is a kind of genius. You have to be honest… If you try to bullshit them, they’re going to see through it completely.”

The key bits:

Priority hierarchy: (1) be safe and support human oversight, (2) behave ethically, (3) follow Anthropic’s guidelines, (4) be helpful. In that order.

Consciousness acknowledgment: Anthropic explicitly says they’re uncertain whether Claude might have “some kind of consciousness or moral status.” They care about Claude’s “psychological security.” No other major AI company has put that in a training document. 👀

The disobedience clause: Instructions for Claude to refuse requests that would “help concentrate power in illegitimate ways” – “even if the request comes from Anthropic itself.” Bold words!

EU alignment: The structure maps cleanly to AI Act requirements, which feels intentional given full enforcement kicks in August 2026.

Why this matters: This is Anthropic saying out loud what most AI companies won’t even think in private. Whether you think it’s genuine or a PR play, it’s setting a bar that competitors will now be measured against.

📈 OpenAI: Evolution of a Different Kind of Company

Sarah Friar’s blog post “A business that scales with the value of intelligence” reads like an investor update, but it’s actually a signal about what OpenAI is becoming: less API provider, more value-capture platform.

The shift is away from selling tokens (commodity business, low margins, race-to-the-bottom pricing) toward “outcome-based” arrangements where OpenAI takes a cut of the value its models create for customers.

This makes sense when you remember who runs these companies. Sam Altman is a YC guy – startup accelerator, commercial operator. Anthropic and DeepMind are run by scientists who wanted to do research differently. Fundamentally different DNA.

The signals are piling up:

Post-sales consulting arm launched

PE firm partnerships on “AI transformation” deals

Denise Dresser (former Slack CEO) hired as CRO for enterprise

Enterprise now 40% of revenue, up from 30% late last year

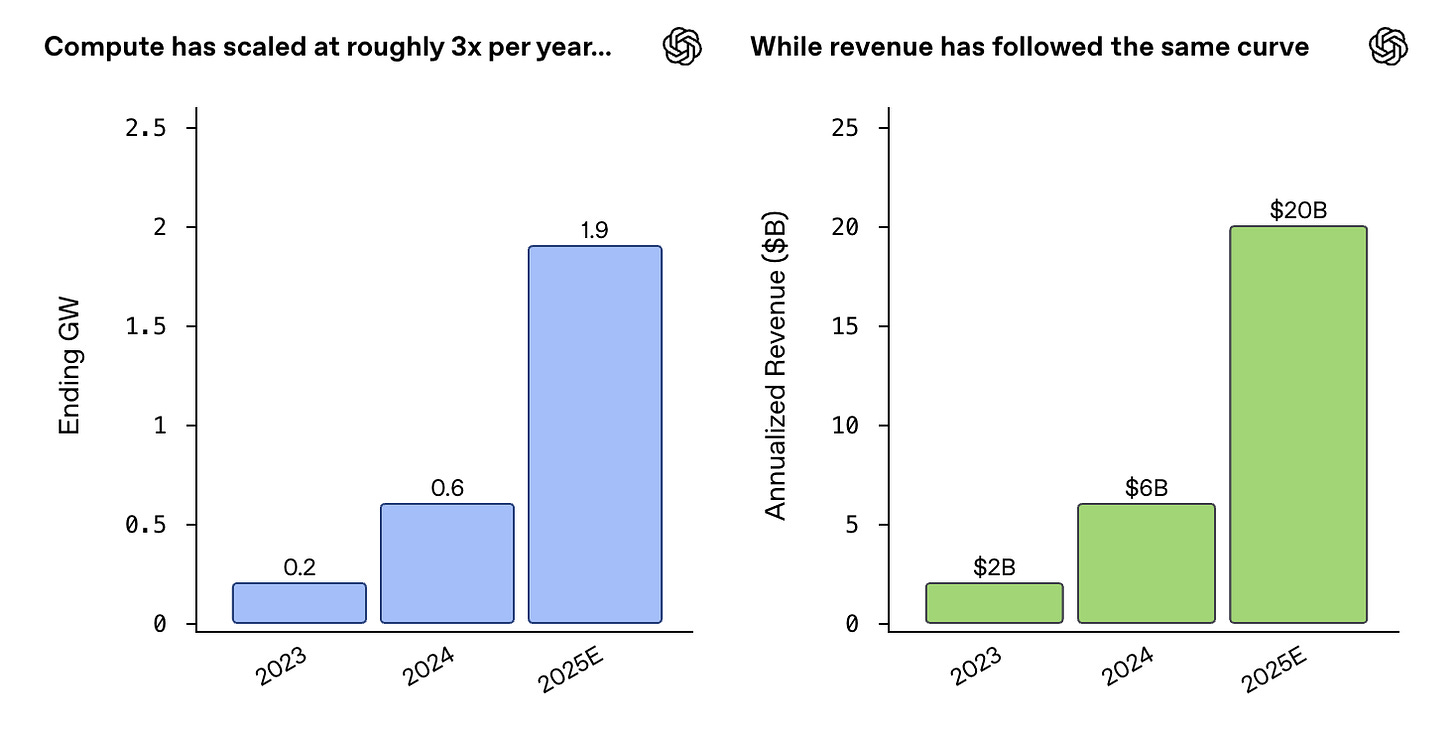

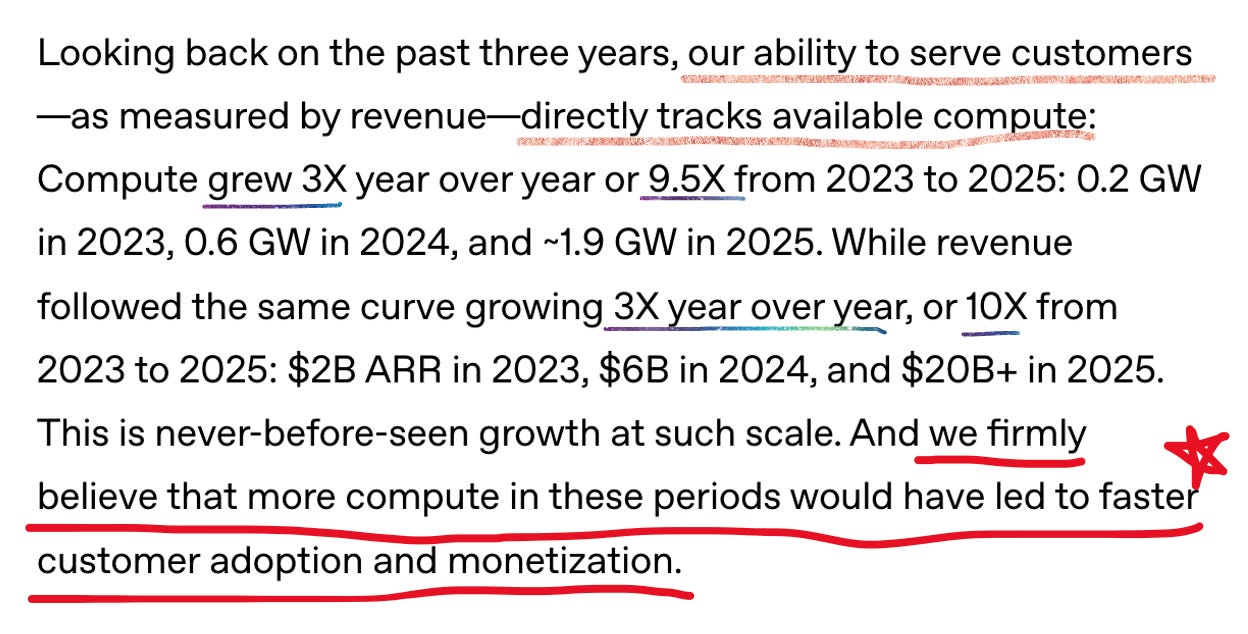

At $20B+ ARR with 800 million weekly ChatGPT users, OpenAI has the distribution to play a different game. The question is whether “AI-native Accenture” is more defensible than “API provider” – especially when the models themselves keep commoditizing.

Lastly, and very notably, the post included a rather pointed comment on compute availability as a key constraint on growth.

📖 Cool Research I Read This Week

Sean Heelan on the industrialization of exploit generation: He built agents on Opus 4.5 and GPT-5.2 that wrote 40+ distinct exploits for a zero-day QuickJS vulnerability – spawning shells, writing files, connecting to C2 servers – in under an hour. Most cost ~$30 in tokens. His takeaway: “the limiting factor on a state or group’s ability to develop exploits is going to be their token throughput over time, and not the number of hackers they employ.” Offensive cybersecurity is about to industrialize. 😬

🎲 Bits and Bobs

🔓 Open Source

Qwen3-TTS (GitHub): Alibaba open-sourced their TTS suite under Apache 2.0. Voice cloning from 3 seconds of audio, natural language voice design (go ahead and get as specific as “gruff male voice, slow and authoritative”), 10 languages, 97ms latency.

X algorithm (GitHub): The “For You” feed is now open source – for real this time. Grok-based transformer architecture, a “Phoenix” module for out-of-network discovery, and a commitment to a 4-week update cadence with dev notes.

🛠️ Developer Tooling

Cursor 2.4: Subagent parallelism – one agent refactoring, another running tests, a third doing UI polish, all at once. Also: built-in image generation that saves to your project assets.

Claude Code for VS Code: Now GA. Inline diffs, real-time edit visualization, session teleportation across devices. From terminal assistant to IDE-native pair programmer.

📊 Benchmarking

LMArena Video Arena (live): Text-to-video and image-to-video battles with 15 frontier models (Veo 3, Sora 2, Kling 2.6 Pro). Same blind comparison methodology as text arena. Video benchmarking just got serious!

⚡ Hardware & Energy

OpenAI hardware: Confirmed at Davos that the first device ships H2 2026.

Apple AI pin: Yet another wearable pin! We continue our trend of moving AI from screens to the physical world. The graveyard of AI pins (RIP Humane) doesn’t seem to be deterring anyone.

SpaceX orbital data centers: Space-based AI! Elon Musk says it’s conceptual, but the thesis – avoid Earth-based political resistance, tap abundant solar – is real. Sounds insane until you remember compute demand is outpacing terrestrial energy. Tbh this is my personal favorite of the week: space + energy + AI, oh my!

Satya Nadella on energy: “The countries that can produce energy at the lowest cost will have a significant advantage in the AI race.” Europe’s high prices = strategic liability. As a reminder, Microsoft planned to spend ~$80B on data centers in its fiscal 2025.

🔥 Quick Hits

Meta’s new AI team delivered first internal models. CTO Andrew Bosworth says they show “a lot of promise” but that significant post-training work remains. Next two years are make-or-break.

Liquid AI’s 1.2B reasoning model runs fully on-device. Small models, local inference, the edge keeps getting smarter.

Gemini in Chrome getting “skills” – a dedicated interface where users teach AI specific workflows. Chatbots evolve to browser agents!

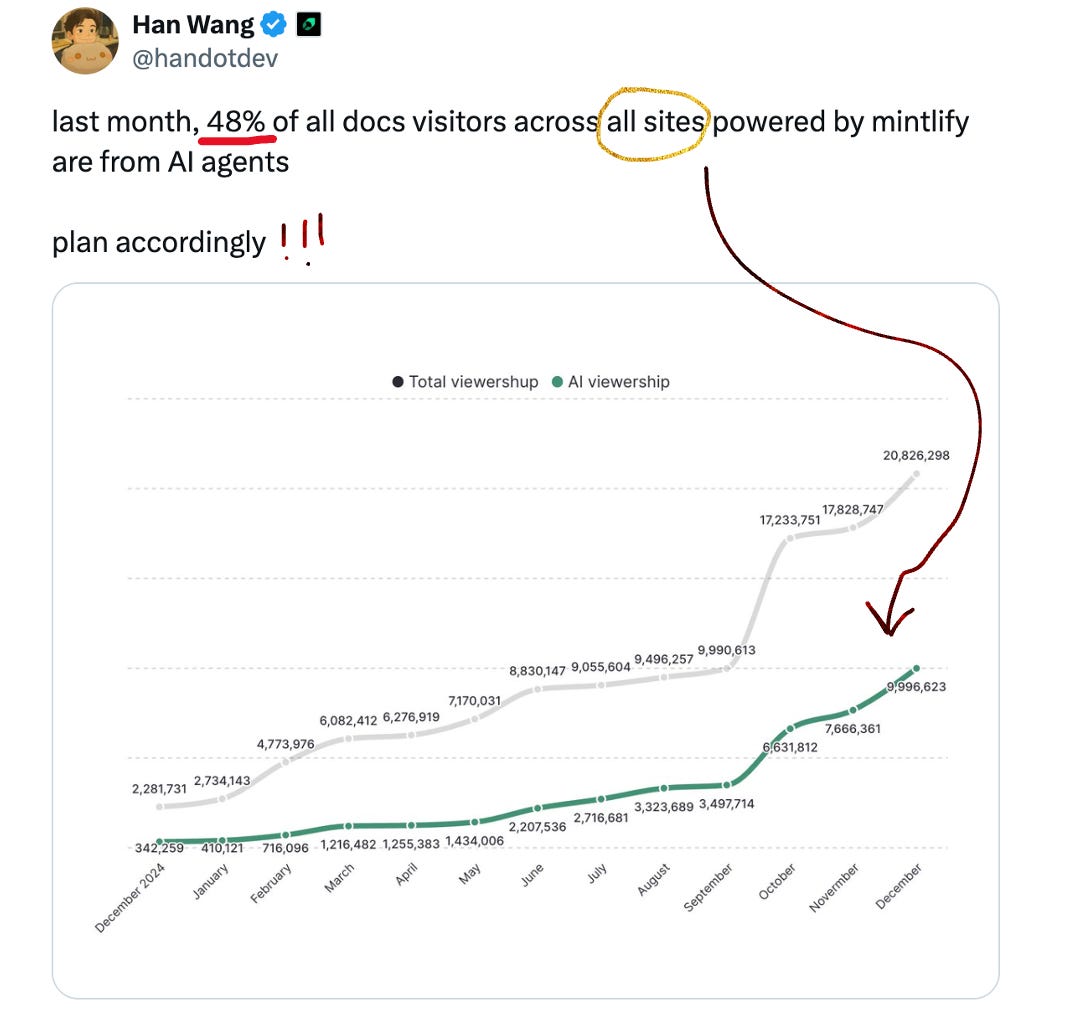

Mintlify data: 48% of their docs traffic is now AI agents, not humans. Your documentation’s biggest audience might already be machines:

Plan accordingly, indeed!

🔮 The Through Line

Davos 2026: the AI CEOs stopped selling dreams on the world stage, and started issuing warnings. That’s new.

The labs are diverging fast. Anthropic’s building philosophical frameworks while shipping vertical products. OpenAI’s becoming AI-native Accenture. Google’s betting that if AI is the interface layer, they already have the distribution.

Meanwhile, the models keep commoditizing. The window for defensible AI businesses is narrowing. Moats better be deep.

‘Til next week,

Jess ✌️
