Seven Shifts

How AI Reshapes Computing in 2025

The past year has seen AI transition from emerging technology to practical tool, with foundation models becoming increasingly woven into our everyday lives. Following last month’s post on the “magnificent seven” AI breakthroughs of 2024, here are my predictions for how AI will fundamentally reshape computing in 2025. We're moving beyond the initial wave of AI adoption into a period of reinventing our software, our infrastructure, and even our basic computing paradigms.

1. The Second Wave of AI Infrastructure

The first wave of AI infrastructure focused on prompt engineering and on adapting existing systems, such as vector databases and traditional security solutions, for generative AI. Now, with real production deployments revealing clear pain points around scalability, security, and reliability, a second wave of AI infrastructure is emerging. This includes:

Secure Access to LLMs: Using private clouds and on-device AI processing not just for enhanced security, but to dramatically reduce latency and costs at scale.

Agent-Specific Infrastructure: Developing specialized memory systems that can efficiently manage context windows, new payment protocols for agent-to-agent services, and robust authentication mechanisms for verifying agent behaviors.

Enhanced Foundation Tools: Advancing vector databases with native support for embedding evolution, building multi-agent orchestration frameworks that can handle complex dependencies, and developing search optimization specifically for LLM interaction patterns.

2. OS for Agents

The atomization of software into agent-driven microservices is driving a fundamental rethinking of how we manage AI systems at scale. In 2025, we'll see the emergence of standardized frameworks for managing AI workloads: think container orchestration, but designed specifically for handling model deployment, agent coordination, and memory management across large fleets of AI services. Picture spinning up a customer service agent that automatically coordinates with inventory management and logistics agents as seamlessly as Unix processes communicate today. Key capabilities will include tensor-optimized resource allocation, embedding space management, and inter-agent communication protocols. This standardization lays the groundwork for true AI-native operating systems beyond 2025, where these patterns will evolve into lower-level primitives handled at the OS level.

3. Simulating Success: How Simulative Environments Enhance AI Reliability

Simulative environments will emerge as the next iteration of AI evaluations. Kudos to Latent Space for early insights (check out their Summer of Simulative AI episode). Unlike traditional testing approaches that rely on static test cases, simulative AI creates dynamic environments where AI systems can be tested in realistic scenarios; think of it as a sophisticated game engine for testing AI applications. Want to test how your customer service AI handles angry customers? Create a simulated environment with varying customer personalities. Developing an AI trading system? Simulate different market conditions and stress-test your algorithms. These environments provide continuous feedback loops for both testing and training, helping identify failure modes before they impact real users. In 2025, expect simulative AI to become a standard part of the AI development toolkit, much like CI/CD pipelines are for traditional software development.
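To make the customer-service example concrete, here is a minimal sketch of a persona-driven simulation loop. It assumes a toy rule-based `toy_agent` standing in for the AI under test; the `Persona` fields, the complaint list, and the pass criteria are all hypothetical illustrations, not the API of any particular simulation framework. The harness generates many conversations per persona and collects the ones that fail, so a blind spot surfaces before real users hit it.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    """A hypothetical simulated customer profile."""
    name: str
    anger: float    # 0.0 (calm) to 1.0 (furious)
    verbosity: int  # how many complaints the customer raises at once


# Hypothetical complaint pool the simulator samples from.
COMPLAINTS = ["my order is late", "the item arrived broken",
              "my card was charged twice", "nobody answered my email"]


def simulate_message(persona: Persona, rng: random.Random) -> str:
    """Generate one customer message whose tone depends on the persona."""
    text = " and ".join(rng.sample(COMPLAINTS, k=persona.verbosity))
    if persona.anger > 0.7:
        return text.upper() + "!!! This is unacceptable."
    text = text[:1].upper() + text[1:]
    if persona.anger > 0.3:
        return text + ". I'm frustrated."
    return text + "."


def toy_agent(message: str) -> str:
    """Placeholder for the AI under test; a real harness would call a model here."""
    reply = "Thanks for reaching out. "
    if "charged twice" in message.lower():
        reply += "I've flagged the duplicate charge for a refund. "
    if "!!!" in message:  # only apologizes when the customer is shouting
        reply += "I'm sorry for the trouble."
    return reply


def passes(message: str, reply: str) -> bool:
    """Hypothetical criteria: apologize to upset customers, refund double charges."""
    upset = "!!!" in message or "frustrated" in message.lower()
    if upset and "sorry" not in reply.lower():
        return False
    if "charged twice" in message.lower() and "refund" not in reply.lower():
        return False
    return True


def run_simulation(personas, episodes_per_persona=20, seed=0):
    """Run many simulated conversations and collect failing messages per persona."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    failures = {p.name: [] for p in personas}
    for persona in personas:
        for _ in range(episodes_per_persona):
            msg = simulate_message(persona, rng)
            if not passes(msg, toy_agent(msg)):
                failures[persona.name].append(msg)
    return failures


if __name__ == "__main__":
    personas = [Persona("calm", 0.1, 1),
                Persona("frustrated", 0.5, 2),
                Persona("furious", 0.9, 3)]
    for name, failed in run_simulation(personas).items():
        print(f"{name}: {len(failed)} failures")
```

In this sketch the "frustrated" persona fails every episode: the toy agent only apologizes to customers who shout, so politely upset customers are ignored. A static test suite written around the angriest case would likely miss that, which is the kind of failure mode varying the simulated personalities is meant to expose.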

4. World Models: Digital Twins Grow Up

Large world models represent the next evolution from digital twins, expanding beyond static, system-specific replicas into dynamic, generative simulations of entire environments. While digital twins mirror physical systems for monitoring and optimization, large world models, such as Google’s Genie 2 and the models being built at Fei-Fei Li’s World Labs, leverage deep learning to generate, interact with, and reason about virtual worlds. This shift enables broader adaptability, allowing these models to simulate counterfactuals, create entirely new 3D environments, and serve as foundation models for spatial intelligence. The result is a more flexible, scalable toolset that opens up possibilities across robotics, AR/VR, autonomous systems, and industrial simulations. In 2025, we’ll see the first wave of these models drive breakthroughs in physical-world AI applications.

5. Fewer Clicks, More Flow: The Evolution of Software Experience

While current AI software still resembles traditional SaaS with chatbot interfaces, the next wave of AI-native startups will fundamentally redesign software to prioritize multi-agent orchestration and human-agent collaboration: think event streams and declarative goals rather than dropdown menus and clicks. I’ve previously written about how autonomous agents will drive a shift towards modular architectures instead of traditional monolithic platforms (Databricks agrees!). The software experience itself must evolve. Today's SaaS platforms are designed for human point-and-click workflows, but tomorrow's enterprise will have AI coworkers that consume and act on information differently. Instead of humans navigating through menus and forms, we'll express high-level intentions that AI agents translate into complex workflows, handling the tedious middle steps automatically. Expect a complete reimagining of the ubiquitous SaaS app.

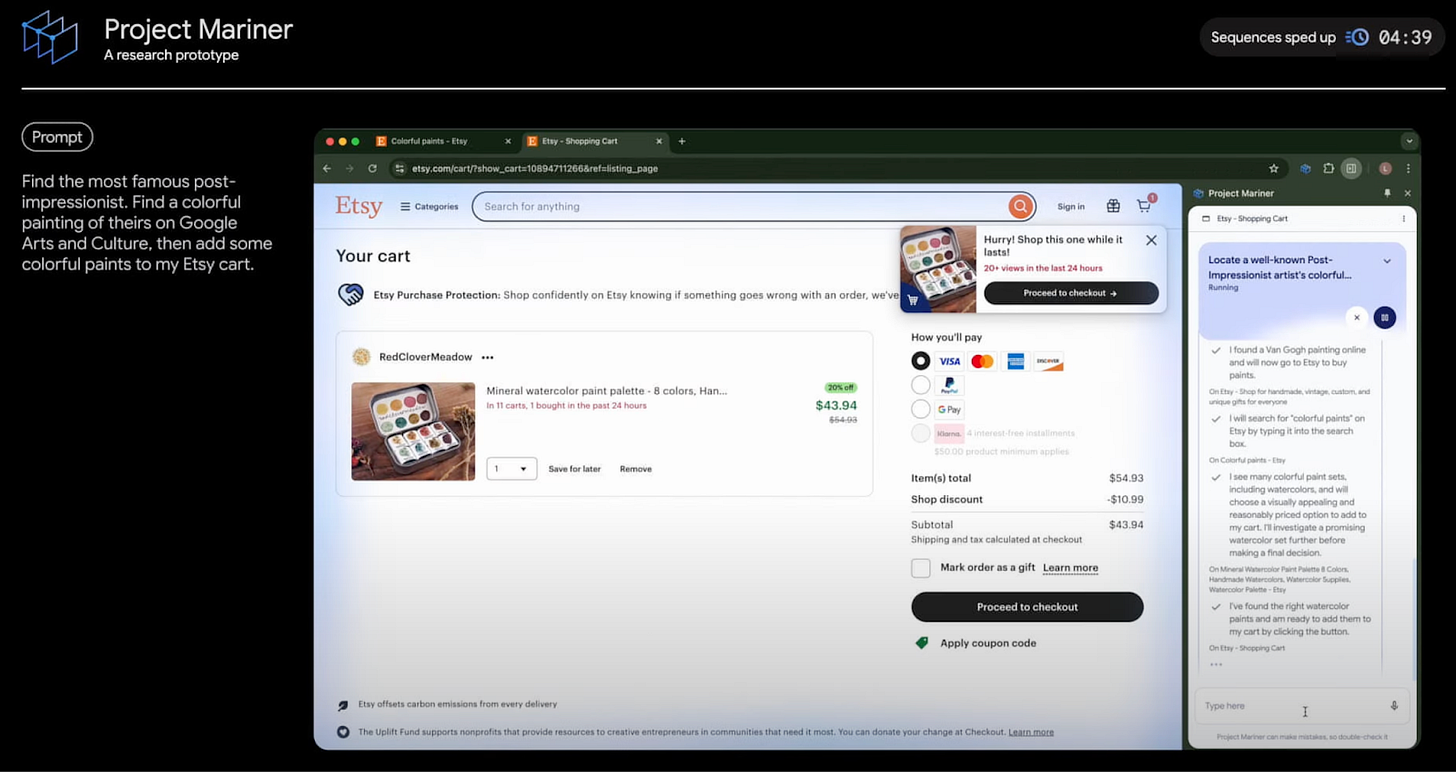

6. AI Gets Hands-On: The Rise of Computer Control

Anthropic's Claude Computer Use and Google's Project Mariner represent a fundamental shift in AI capability: direct computer interaction through visual reasoning models and action planning systems. While current implementations feel experimental, they're laying the groundwork for a new paradigm where AI serves as an intelligent intermediary between natural language intent and system execution. This bridges a crucial gap, enabling non-technical users to express complex workflows in natural language while the AI handles the technical implementation. The result will transform how we interact with digital systems, making sophisticated automation accessible to everyone.

7. Beyond Chat: The Multi-Sensory AI Interface

We’ll continue to see advancements in the human-AI interface. OpenAI’s advanced voice mode now supports both video and screen sharing, so the AI can see what you see, and Google’s Project Astra likewise lets users speak or share live video to interact with the assistant. While phones remain the dominant medium for AI interaction, the next wave of interfaces will leverage ambient computing paradigms: smartwatches evolving from notification devices into context-aware AI interfaces, and AR glasses that may finally find their killer app in AI-enhanced reality. The key breakthrough needed isn't just miniaturization, but the development of natural interaction patterns that make AI feel like a seamless part of our environment.

Looking Ahead: The AI-Native Future

These seven shifts point to a clear direction: we're moving from adapting existing systems to work with AI, toward building new systems that are AI-native from the ground up. This transformation will touch every layer of the computing stack, from how we design interfaces to how we manage computational resources. 2025 will be the year when AI stops being a feature and becomes the foundation of how we build and interact with software.