From Code Red to Code Shipped

GPT-5.2 lands, Disney writes a $1B check, and yes – I have thoughts on the naming convention 🧄

Last week I wrote about OpenAI’s “code red.” This week, they answered the call. AND I HAVE MANY THOUGHTS!

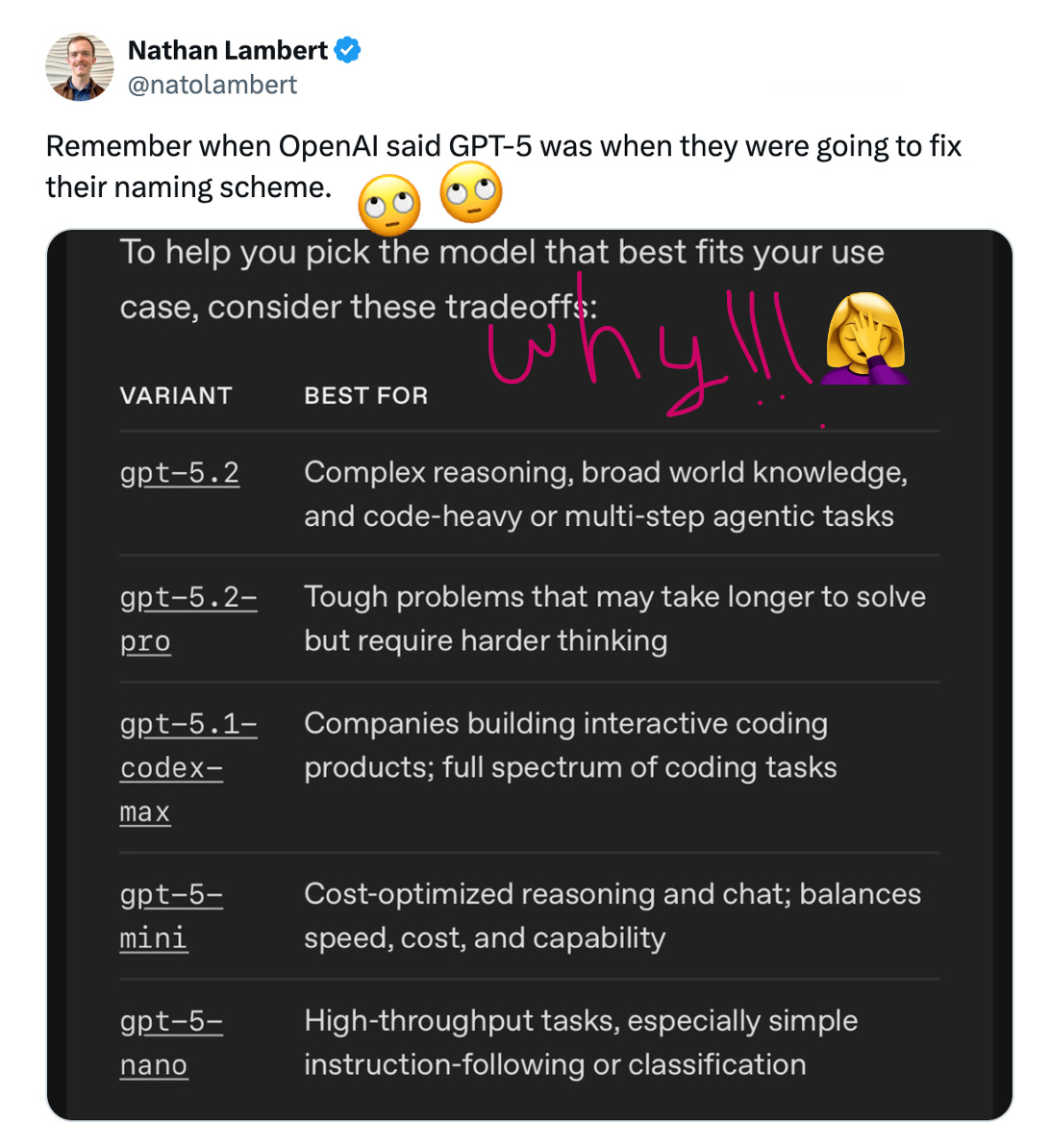

GPT-5.2 has been released (hooray!). The model, as I mentioned last week, was internally known as Garlic. Accompanying the release, OpenAI has helpfully released this lovely looking chart below that toooootally makes the naming conventions super helpful and clear if you’re trying to decide what model to use. (NOT!)

Okay, I know the name shouldn’t bother me so much. It’s an overdone topic! I’m well aware I’m perhaps making a poor choice to harp on it – in the intro of my blog no less! But today, I’m sorry, a diatribe *is* coming.

I promise, I promise, I will write about the substantive stuff too. I’ve never let you down, have I, dearest reader? Stick with me, okay? Okay?? I mean, sure, fine – you don’t actually have to. You can go straight to the first section. Ctrl+F ‘“Code Red” Response’ and I’ll see you there, if you must. It’s fine. It’s fine! You’ll miss a good laugh, but it’s all good, we can still be friends! 🤗

Ok, still with me? Ok good, because I can’t help myself!!! It bothers me!!! I would like to know WHO is coming up with these codenames. Let it be known that when I was at Palantir and we were working on a big new platform release for the Gotham product, we decided the naming convention for all future releases would be Saturn’s moons. Yes, I know “Titan” would be a trite name today for a brand new release, but in 2019, it was cool!!! It felt tech-y!!! And vibe-y! I’d get up in the morning and say YEA! Hello mother freaking world! I’m working on Titan today!!! 🚀🚀🚀

WHO gets up in the morning thrilled to work on Garlic and Shallotpeat? Like mmmm, yay, alliums!?? Are they all walking around the OpenAI office saying “YES CHEF” every time Sam Altman tells the research team it’s code red? Should code red have actually been called code marinara? SO MANY QUESTIONS.

Alrighty, that’s quite enough of that. /endrant

Lots of other notable business deals in AI land this week. Many of them involving OpenAI! Guess who’s back, baby! Disney wrote them a billion-dollar check (boohoo to Google, and what a coup for OpenAI). Three former public company CEOs are now running OpenAI’s business operations - I think they are taking that enterprise reversal with Anthropic quite seriously. And somehow, in the middle of all this chaos, the entire AI industry – Anthropic, OpenAI, Google, Microsoft – agreed on how agents should actually talk to each other.

Oh, and underlying it all, the US power grid is buckling under $400 billion in data center commitments. That part’s not good news.

Let’s get into it:

📋 This Week’s Cheat Sheet:

GPT-5.2 ships – 400K context, 80% SWE-bench Verified, enterprise-first positioning. OpenAI says it’s ready to exit “code red” by January. We shall see!

Disney invests $1B in OpenAI – 200+ characters licensed to Sora. Mickey Mouse in AI-generated videos. Exhibit A) Hollywood capitulates. Exhibit B) Google is feeling real left out right now!

Agentic AI Foundation launches – Anthropic, OpenAI, Google, Microsoft agree on agent standards. This is huge!!! It’s so nice when we are all friends!!!

Power grid crisis – US added 51 GW last year vs China’s 429 GW. Data centers sitting idle for lack of electricity. This is the real infra we need to solve!

OpenAI’s CEO hiring spree – Three public company CEOs now run OpenAI’s business. Pattern worth watching for sure. Dario isn’t going to sleep so well at night now. 🙅‍♂️

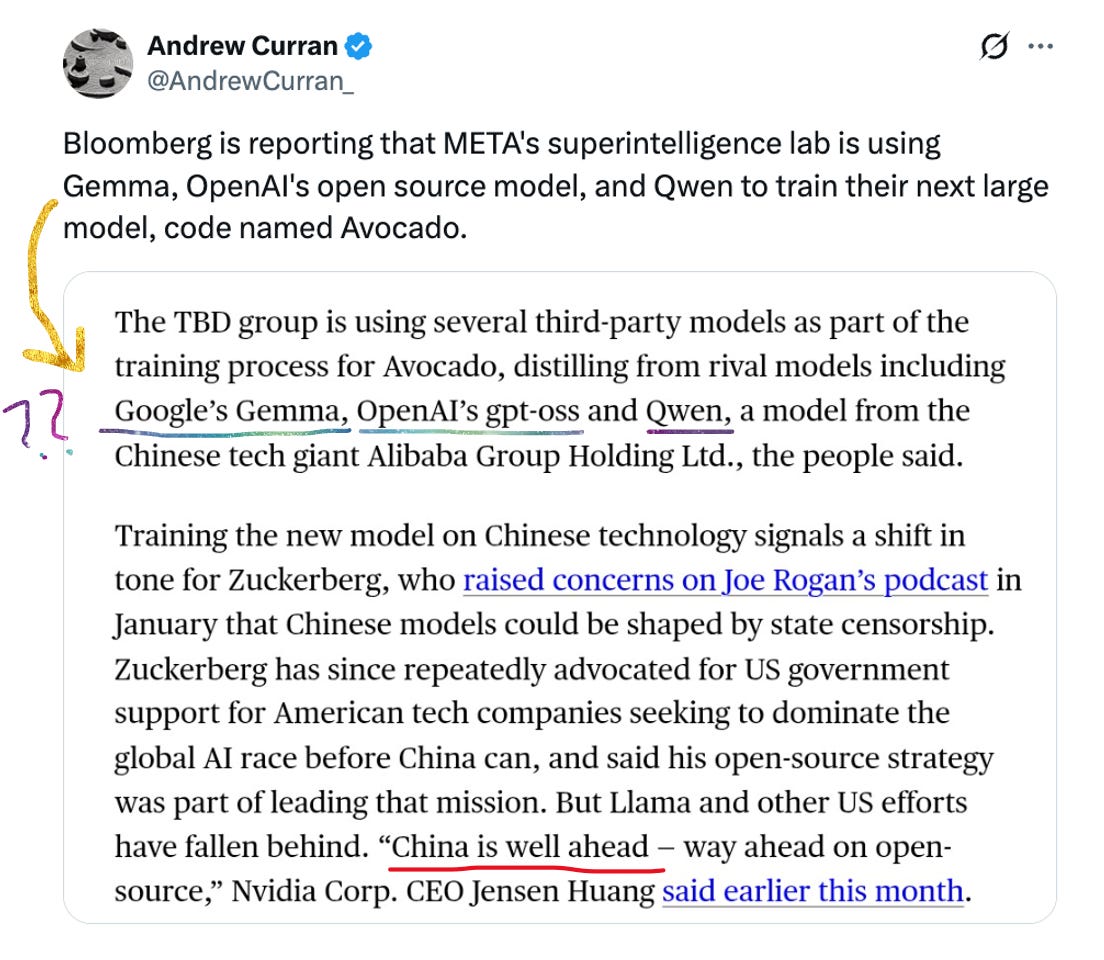

Meta pivots away from open source – “Avocado” replaces Llamas. Internal confusion ensues. (WHAT IS IT WITH ALL THE FOOD NAMING CONVENTIONS!) (at least avocados are yummier than shallots) (though I do like a shallot) (ok, I’ll stop)

🔥 GPT-5.2: OpenAI’s “Code Red” Response

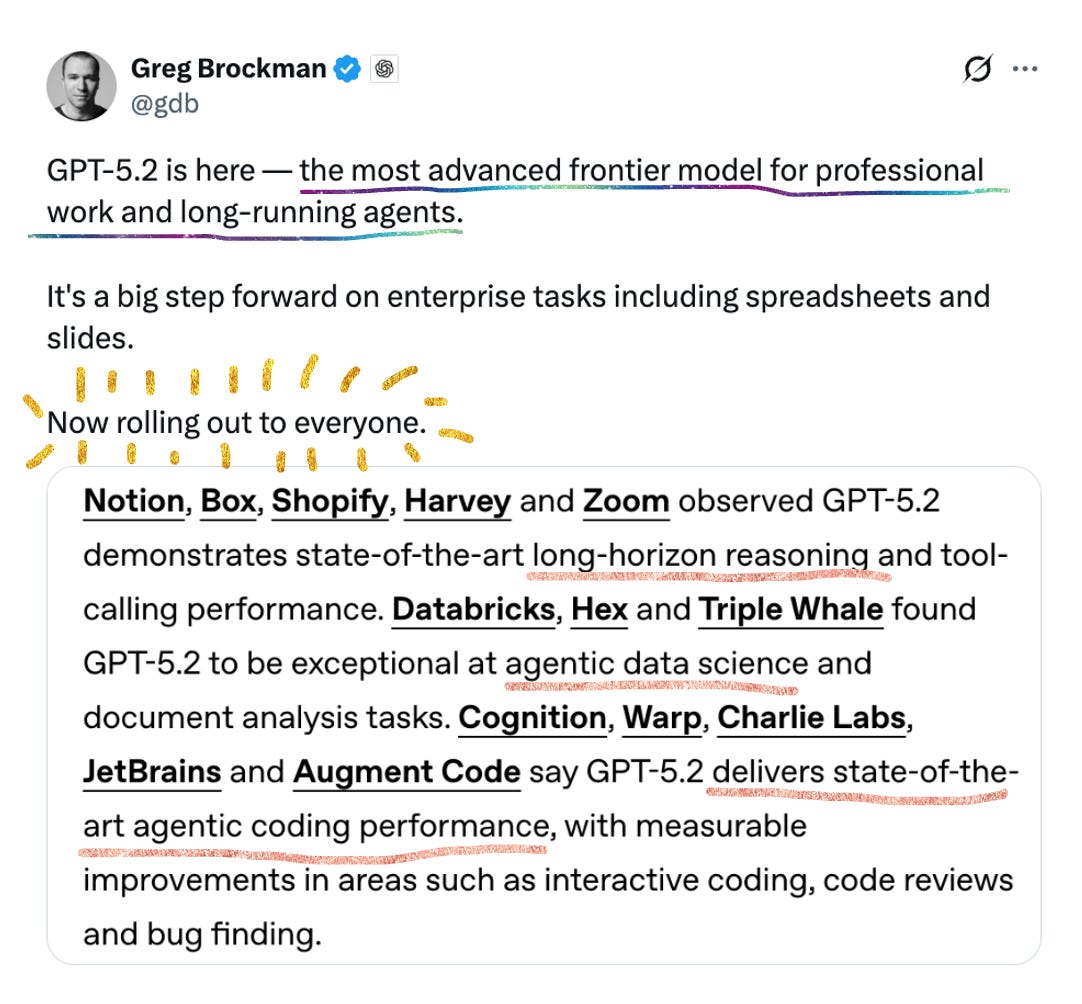

As promised, OpenAI released GPT-5.2 (yes, Garlic 🙄), and the messaging is clear: this is an enterprise play.

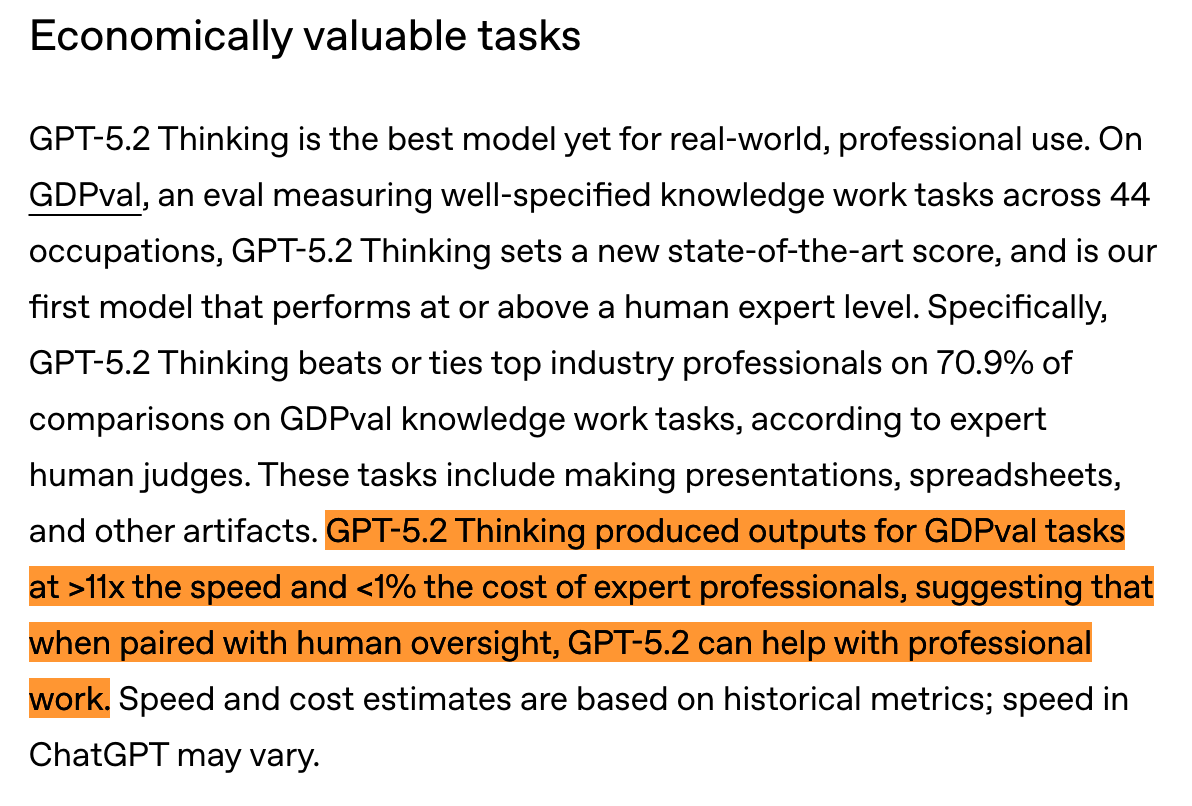

The specs are impressive: 400,000-token context window, 128K max output, 80% on SWE-bench Verified, and their (very fascinating / AGI-watch-esque) GDPval benchmark showing the model “beats or ties top industry professionals on 70.9% of comparisons across 44 occupations.”

Three flavors for 5.2:

Instant – speed-optimized for routine queries

Thinking – complex reasoning, coding, long documents

Pro – maximum accuracy for hard problems

The pitch is unapologetically enterprise. Greg Brockman called it “the most advanced frontier model for professional work.” The announcement leads with time savings: 40-60 minutes/day for average users, 10+ hours/week for heavy users.

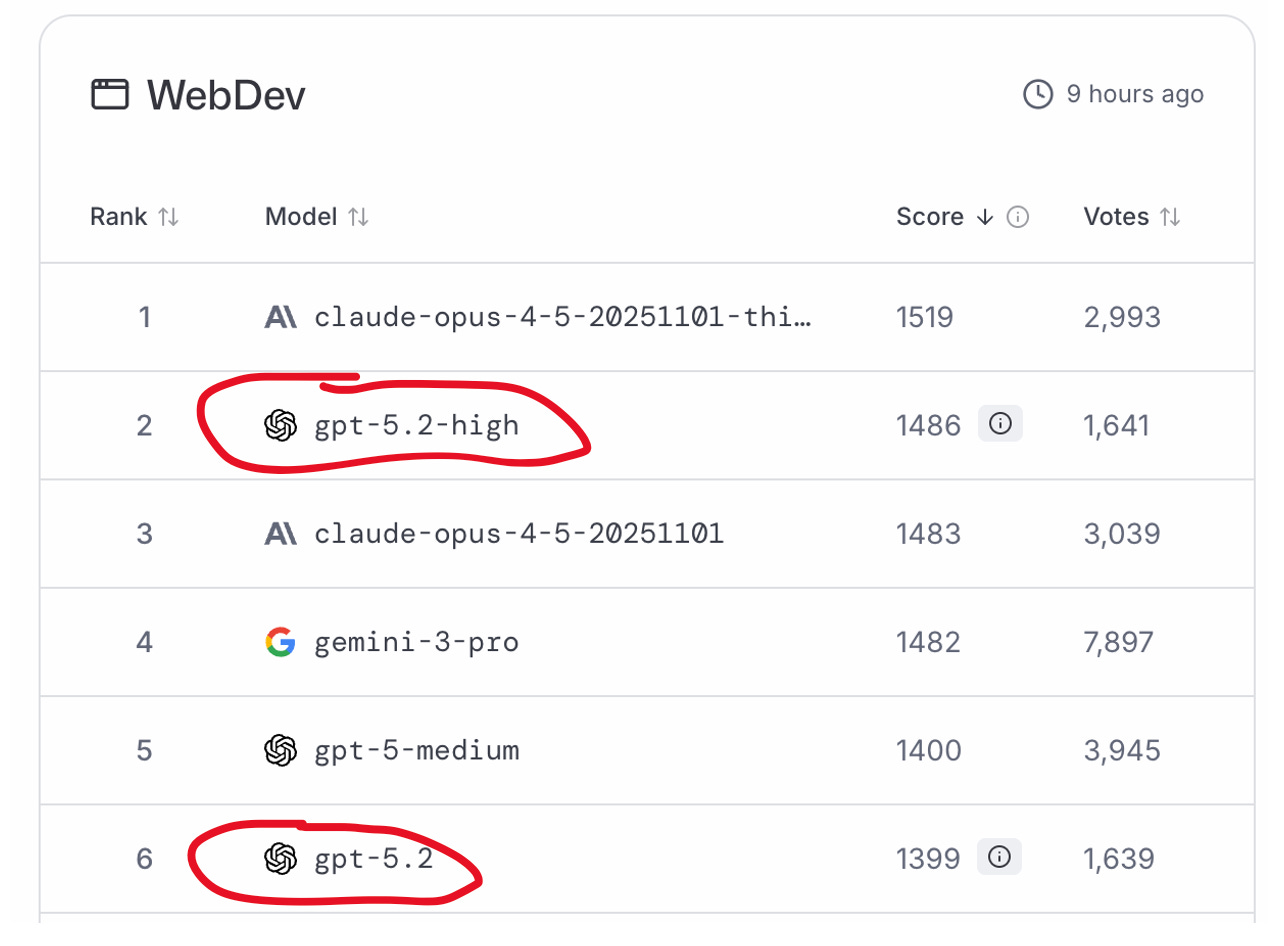

Benchmarks (from OpenAI’s chart) claim GPT-5.2 Thinking edges out both Gemini 3 and Claude Opus 4.5 across SWE-Bench Pro, GPQA Diamond, and the ARC-AGI suites. Though LMArena (preliminarily) still puts 5.2-high in the #2 position on the WebDev leaderboard, still behind Anthropic:

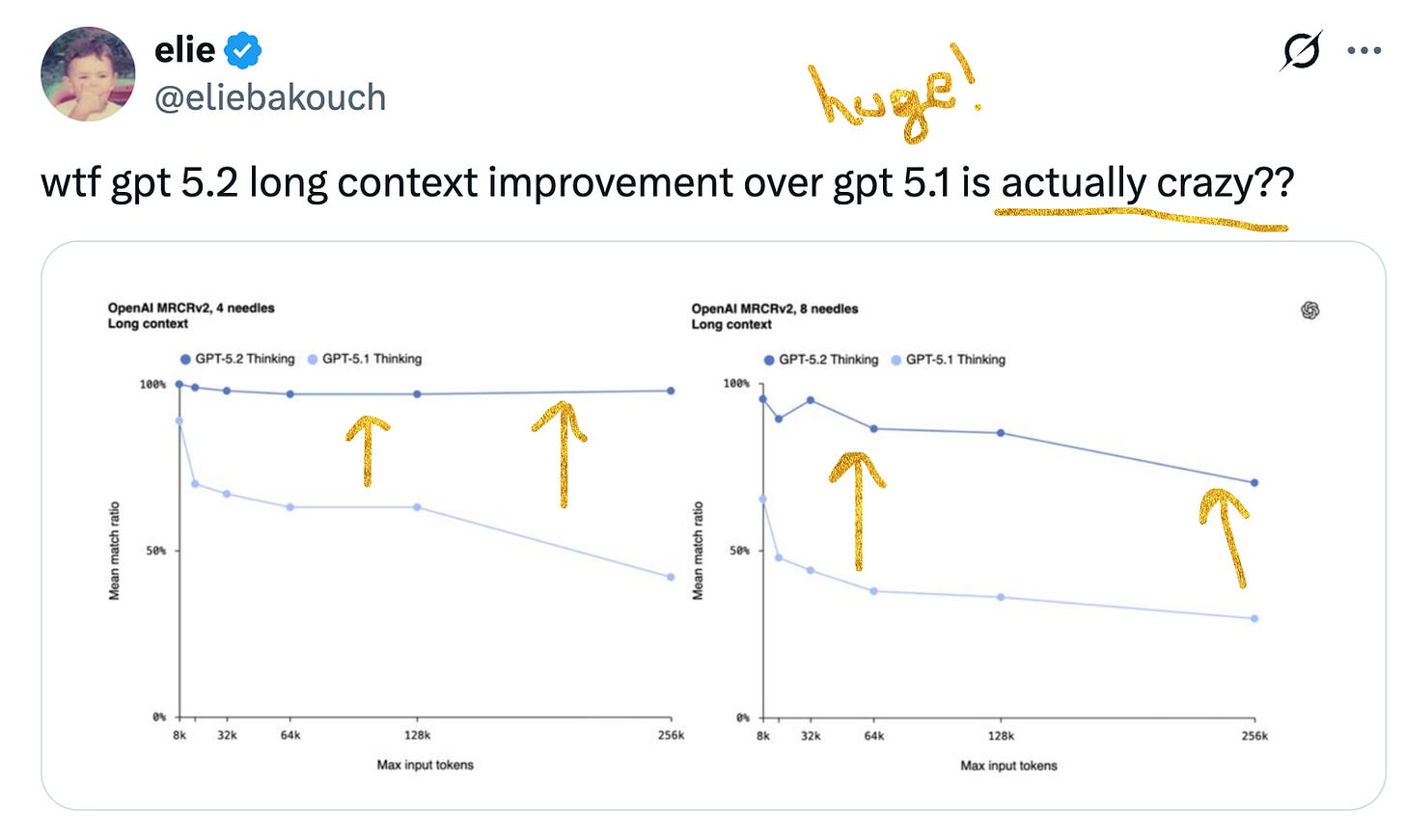

One extremely impressive improvement vs. 5.1 is around long context – I mean look at that chart jump!

Some interesting behind-the-scenes chatter: OpenAI reportedly overruled some employees who wanted to delay the release for more polish. Though Fidji Simo pushed back on the “rushed” narrative, claiming GPT-5.2 was “in the works for many, many months” and the code red “helped focus the company” but wasn’t the reason it shipped this week. (Yaa ok, but I still think it was dat Code Marinara that made them do it!)

The bigger picture: OpenAI has committed $1.4 trillion (yes, trillion) to AI infrastructure buildouts over the next few years. Those commitments were made when they had first-mover advantage. Regardless of how much of an improvement 5.2 is over 5.1, the narrative now is that they’re having to play some catch-up. The stakes have never been higher.

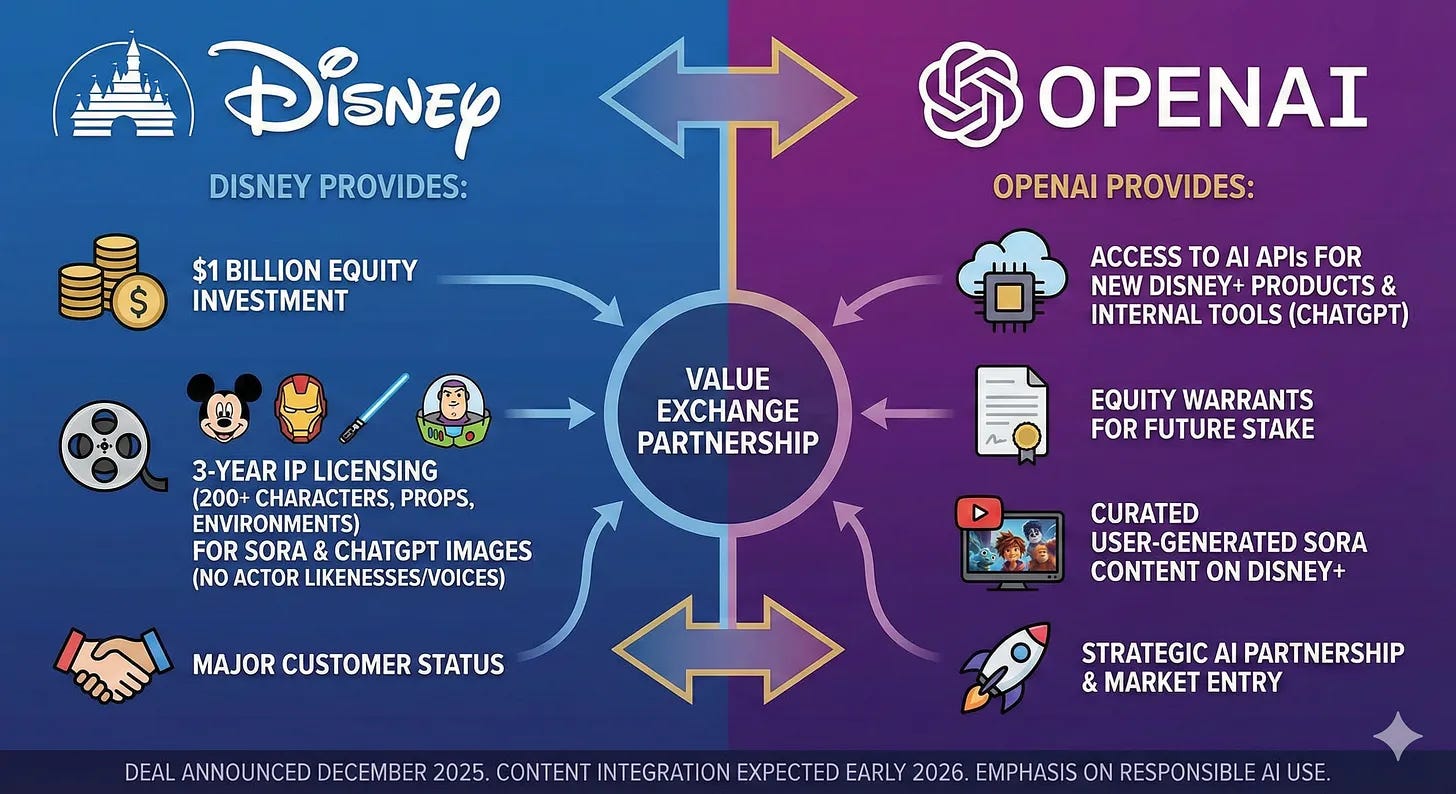

🏰 Disney Just Changed the (Character) Game

Disney is investing $1 billion in OpenAI and licensing 200+ characters to Sora.

Read that again. Mickey Mouse. Darth Vader. Iron Man. Elsa. All available for user-generated AI video content.

And you know who does NOT have a license? Google. 😢 Early YouTube licensing agreements are not saving them, and Disney explicitly called Google out within a day of announcing the OpenAI partnership:

The three-year deal makes Disney the first major content licensor on Sora. Users will be able to create short-form videos with Disney, Marvel, Pixar, and Star Wars characters starting early 2026. The agreement doesn’t include talent likenesses or voices (so no Tom Hanks Woody), but covers costumes, props, vehicles, and “iconic environments.”

Some context on why this matters:

Two months ago: CAA called Sora “exploitation, not innovation.” WME opted out all clients. Studios were threatening lawsuits.

Wednesday: Disney sent Google a cease-and-desist for AI character infringement

Thursday: Disney announces billion-dollar OpenAI partnership

🔄 The flip is extraordinary. Disney is betting that monetizing through licensing beats fighting a losing legal battle. If you can’t stop AI-generated Darth Vader content, at least get paid for it.

The deal also makes Disney a “major customer” of OpenAI – ChatGPT deployed for employees, APIs used for Disney+ experiences, and curated Sora videos streaming on the platform.

Sam Altman called Disney “the global gold standard for storytelling.” Bob Iger stressed this “does not represent a threat to creators.”

Whether creators agree remains to be seen. 🙃

🤝 The Agentic AI Foundation: Everyone Agrees (Finally)

Anthropic, OpenAI, Google, Microsoft, and others are launching the Agentic Artificial Intelligence Foundation – an industry group to standardize how AI agents work together.

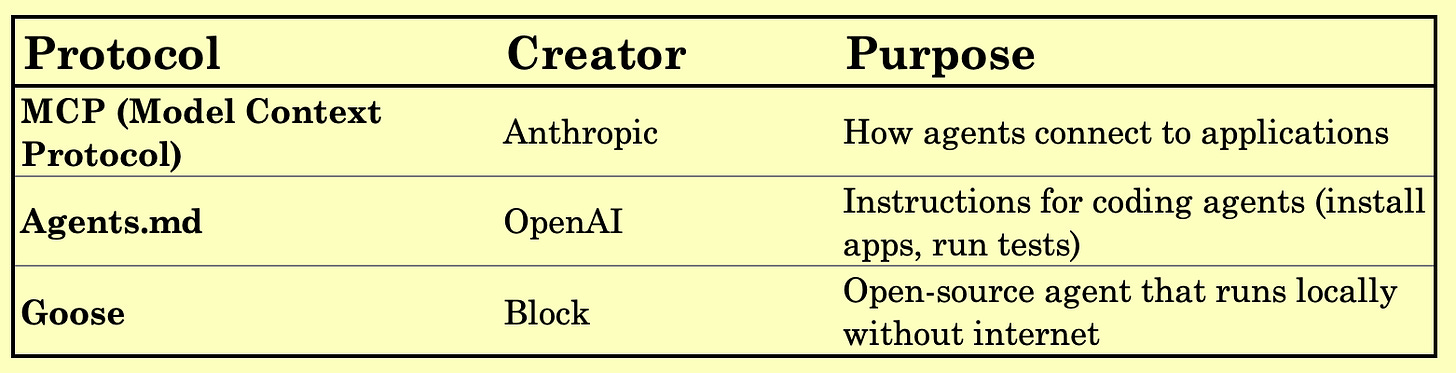

The Linux Foundation is organizing it. The analogy is interbank electronic payments: different banks, same protocol. The group will focus on three existing open-source tools:

This is a big deal!! These companies are fierce competitors, and MCP alone has 97M monthly SDK downloads. Standardization means agents from different providers can actually talk to each other and plug into the same tools.
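To make "plug into the same tools" a bit more concrete, here is a minimal sketch of the idea. To be clear, this is NOT the MCP SDK or any real vendor API: `Tool`, `ToolServer`, `Agent`, and the `add` tool are all hypothetical names invented for illustration. The only point is that a tool gets described once, and any agent that speaks the shared interface can call it.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Tool:
    """A tool described once, in a vendor-neutral shape (hypothetical)."""
    name: str
    description: str
    handler: Callable[[dict], dict]


class ToolServer:
    """Hypothetical stand-in for a server exposing tools over a shared protocol."""

    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def list_tools(self) -> List[str]:
        # Any agent can discover what is available.
        return sorted(self._tools)

    def call(self, name: str, arguments: dict) -> dict:
        # Every caller gets the same response envelope, whatever its vendor.
        if name not in self._tools:
            return {"ok": False, "error": f"unknown tool: {name}"}
        return {"ok": True, "result": self._tools[name].handler(arguments)}


class Agent:
    """Any vendor's agent; it only needs to speak the shared interface."""

    def __init__(self, vendor: str, server: ToolServer) -> None:
        self.vendor = vendor
        self.server = server

    def use(self, tool_name: str, **arguments) -> dict:
        response = self.server.call(tool_name, arguments)
        return {"vendor": self.vendor, **response}


def add_numbers(args: dict) -> dict:
    return {"sum": args["a"] + args["b"]}


server = ToolServer()
server.register(Tool("add", "Add two numbers", add_numbers))

# Two agents from (made-up) competing vendors share one tool server.
agent_a = Agent("vendor_a", server)
agent_b = Agent("vendor_b", server)

result_a = agent_a.use("add", a=2, b=3)
result_b = agent_b.use("add", a=2, b=3)
print(result_a)
print(result_b)
```

Without a shared interface, each vendor would need its own adapter for each tool. With one, the tool author writes it once and every agent benefits.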

Google doubled down this week by launching managed MCP servers that make Maps, BigQuery, and other Google/Cloud services “agent-ready by design.”

The subtext: whoever wins the agent wars won’t be the company with the best model – it’ll be the one whose agents can actually do things in the real world. And that requires standards.

⚡ The Grid Is the Bottleneck

FT released a sobering report this week: The power crunch threatening America’s AI ambitions.

The numbers are stark:

US added 51 GW of new capacity last year

China added 429 GW (!!!!)

Data centers account for >50% of projected US load growth through 2030

Interconnection queues stretch beyond 8 years in some regions
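For a sense of scale, here is a quick back-of-the-envelope calculation using only the two capacity figures quoted above. The variable and function names are mine, and this compares just those two headline numbers, nothing more.

```python
# Figures quoted in the list above: gigawatts of new capacity added last year.
US_ADDED_GW = 51
CHINA_ADDED_GW = 429


def capacity_ratio(a_gw: float, b_gw: float) -> float:
    """Return how many times larger a_gw is than b_gw."""
    return a_gw / b_gw


ratio = capacity_ratio(CHINA_ADDED_GW, US_ADDED_GW)
gap_gw = CHINA_ADDED_GW - US_ADDED_GW

print(f"China added {ratio:.1f}x the US total, a gap of {gap_gw} GW")
# prints "China added 8.4x the US total, a gap of 378 GW"
```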

Hyperscalers have committed over $400 billion to new data centers. Many facilities sit stalled because they can’t get electricity. The grid still runs on infrastructure built during the Johnson and Nixon administrations.

The workarounds are expensive and ugly: onsite gas turbines, restarting idled nuclear units, private generation clusters. xAI in Memphis, OpenAI in Abilene, Meta in Ohio – all building their own power solutions because the grid can’t deliver.

⚡⚡ Why it matters: America has staked its economic and geopolitical edge on AI, but the infrastructure beneath that ambition is buckling. A persistent power shortfall delays model training, slows product roadmaps, and risks ceding advantage to China, where rapid grid expansion directly supports national AI goals.

It’s good for everyone to remember that AI is not just about hype cycles; there’s very real physics involved. I was excited this week to announce Decibel’s investment in Unconventional AI for precisely this reason. 🚀 We need to reimagine what AI training looks like, or we might be in serious trouble!

🦙→🥑 Meta’s Open Source Confusion

Bloomberg has the goods on Meta’s strategy pivot: From Llamas to Avocados.

The once-confident open-source playbook has splintered into a “costly, high-pressure scramble” around a new frontier model codenamed “Avocado.” Teams are strained. Investors want coherence. The Behemoth model never shipped. And the pivot from open-source champion to closed-model competitor is... confusing everyone. Especially since now they are apparently distilling models?! 🤔

Meanwhile, in other AI strategy news, Meta acquired Limitless (fka Rewind) this week – the AI pendant startup that records conversations. The team will support existing AR/AI wearables rather than launch new products. RIP to this 2022 tweet from the CEO:

Let this be a good reminder not to make promises you can’t keep. Nothing wrong with being acquired, btw!!!! In fact, often a very great thing! But rough to say this publicly, then have this receipt online when you, in fact, get acquired.

👔 OpenAI’s Public Company CEO Pipeline

OpenAI announced Denise Dresser (Slack CEO) as their new Chief Revenue Officer this week. But zoom out and you’ll notice a pattern: this is the third public company CEO they’ve recruited into leadership in the past year.

What’s happening here? A few reads:

➡️ The IPO prep theory: You don’t hire three public company CEOs unless you’re thinking about becoming one. Sarah Friar took Nextdoor public. Fidji Simo took Instacart public. Slack was public before Salesforce acquired it. This is a team that knows what Wall Street wants.

➡️ The “Sam is stepping back” theory: Back when Fidji was hired, The Information reported that Altman has told people he’s “not interested in continuing to run all of OpenAI for much longer.” Fidji Simo now oversees COO Brad Lightcap, CFO Sarah Friar, and CPO Kevin Weil. That’s... most of the company. The gossip mill says Sam is increasingly focused on research, compute, and the Jony Ive hardware project. (Makes sense - running businesses is way less fun than building products!! - a startup investor)

➡️ The advertising theory: Fidji built Facebook’s advertising platform and turned Instacart into one of the biggest retail ad businesses outside Amazon. Denise Dresser ran Slack’s enterprise sales machine. OpenAI has been experimenting with shopping features and ads. These aren’t random hires.

Whatever the reason, the message is clear: OpenAI is no longer a research lab pretending to be a company. They’re building the management bench of a Fortune 500.

🔬 Bits & Bobs

🤖 Model Releases

Essential AI Labs releases Rnj-1 – Transformer paper co-authors Ashish Vaswani and Niki Parmar released their first model: an 8B-parameter open-source system claiming “frontier-matching” abilities in coding, math, and agentic reasoning.

Mistral Devstral – France’s AI champion launched their first autonomous coding agent. The “sovereign AI” angle is increasingly real – European enterprises that want frontier capabilities without US or Chinese entanglements now have an option. Whether Mistral can actually compete on capabilities is a different question, but the positioning is smart.

OpenAI’s next models spotted in testing: Image-2 (Chestnut), Image-2-mini (Hazelnut). Someone at OpenAI REALLY loves food names. Good God!

🌍 Geo(politics)

Trump approves Nvidia H200 sales to China – With a 25% cut to the US government. Jensen lobbied directly. The geopolitical implications are significant: this reverses the direction of recent export controls and suggests the administration is prioritizing revenue over containment. The 25% “tax” is novel – essentially treating chip exports as a trade revenue stream rather than a national security question.

Google DeepMind UK Lab – New materials discovery research facility as part of Alphabet’s UK government partnership. This is the automated research lab angle – using AI to discover novel materials for medical imaging, solar panels, and chips. The UK is positioning itself as the “AI safety and science” hub, and Google is happy to play along for regulatory goodwill.

💼 Enterprise News

OpenAI State of Enterprise AI Report – Worth reading. Enterprise usage surged dramatically over the past year. This is their pitch deck for GPT-5.2’s enterprise positioning.

Anthropic + Accenture – Partnership announced targeting business clients. We love how the FDE mimic machine keeps rolling.

🤔 The “I Have No Idea Where to Categorize These” Section

Time Person of the Year: “The Architects of AI” – The cover recreates the famous 1932 “Lunch atop a Skyscraper” photo with eight AI leaders, from Zuckerberg to Fei-Fei Li. The headline is right: 2025 was the year AI stopped being about the future and became about the present.

The Grok 4.20 trading competition tweet – This is wild. Grok’s edge apparently came from “Situational Awareness mode” that monitored competitor positions in real-time, plus direct access to the X Firehose processing ~68 million tweets/day for ultra-short-term signals. Whether this is impressive or terrifying depends on your priors about AI trading systems.

🔮 So What Does This All Mean?

Three threads worth pulling:

1. OpenAI is building for the public markets, not just the product market.

Three public company CEOs in leadership. A $1B strategic investment from Disney. An enterprise-first model positioning. This is a company preparing for an IPO, perhaps (?) even racing Anthropic to get there. The code red response clearly wasn’t solely about shipping a model. It’s an entire mindset shift – enterprise-ready, #1.

2. Hollywood just set the template for how IP owners engage with AI.

Disney’s move is capitulation dressed up as partnership. Two months ago, the major agencies were calling Sora “exploitation, not innovation.” Wednesday, Disney sent Google a cease-and-desist. Thursday, Disney announced a billion-dollar OpenAI deal. No shade there on my part – if you can’t beat ‘em, join ‘em.

The calculus is clear: if you can’t stop AI-generated content featuring your IP, monetize it through licensing and maintain some control. Expect other studios to follow. The question now is what this means for talent – the deal explicitly excludes “likenesses and voices,” but for how long?

3. The agent wars just got standardized.

The Agentic AI Foundation is the most consequential announcement this week that we don’t seem to be talking enough about. When Anthropic, OpenAI, Google, and Microsoft agree on protocols, it’s good news for anyone building agents. Last-mile reliability has continued to plague many a pilot, and the hope is this helps agents evolve into tools that can interact with real systems at scale.

MCP alone has 97M monthly SDK downloads. Google launched managed MCP servers this week to make Maps and BigQuery “agent-ready by design.” The subtext: whoever wins the agent wars won’t be the company with the best model – it’ll be the one whose agents can actually do things in the real world. And that, it seems, requires standards.

🫡 ‘til next week friends!

—Jess